A/B Testing (also known as bucket testing or split testing) can help you test different message variations in order to understand what types of communication resonate most with your audience.

Setting a Hypothesis

When approaching A/B testing, it’s best to start by formulating a hypothesis. Consider a goal that you’d like to achieve or something important that you are looking to learn. Should the messaging be funny or more practical? Are you looking to educate your audience or promote something specific to them? It’s helpful to keep your overall business objectives in mind when coming up with a hypothesis: are you aiming to increase traffic, generate leads, drive conversions, or boost revenue? Choose an A/B test objective and variables that align with your overarching goals.

What Variables Should You Test with A/B Testing?

Determining what variables to A/B test is often the hardest decision to make, but here are a few options to help you get started:

Message Wording

Same same, but different. Test whether your subscribers respond better to slight changes in message titles, or whether they prefer short, punchy phrases or longer sentences.

Call-to-Action

Providing a clear call-to-action can urge subscribers to take your desired actions. For example, “Sit back & relax. Save 10 percent on all shake orders this weekend only. Tap to redeem.”

Action buttons, which allow more than one action to be taken on a notification, are great for call-to-action A/B testing. You can test different CTA text to see which variation earns a higher click-through rate (CTR) or conversion rate. Some additional CTA examples include: grab now, click here, check offer, click to redeem, and click now.

Humor

Companies sometimes shy away from sending funny notifications and opt to keep things more formal in an effort to be professional. The truth is, a serious tone isn’t the right approach for every brand. Humor can be a meaningful way to capture attention and connect with followers. It can also work great with the right images.

Tinder, a popular dating app, sent a cheeky notification that read “A new person is giving you a chance! Probably not gonna work out, though.” This message fits their brand identity, helps create strong brand connections, and drives users to open their app.

Urgency

Letting your users know exactly when a promotion ends (as opposed to just highlighting the value of the promotion) can give your message more urgency. For example, consider the following two message variations:

Variation 1: “Only 10 more hours to save $10 on your pizza order”

Variation 2: “You’ve still got time to enjoy $10 off your next cheese pizza!”

The first message may have a higher click-through rate because it generates a sense of urgency.

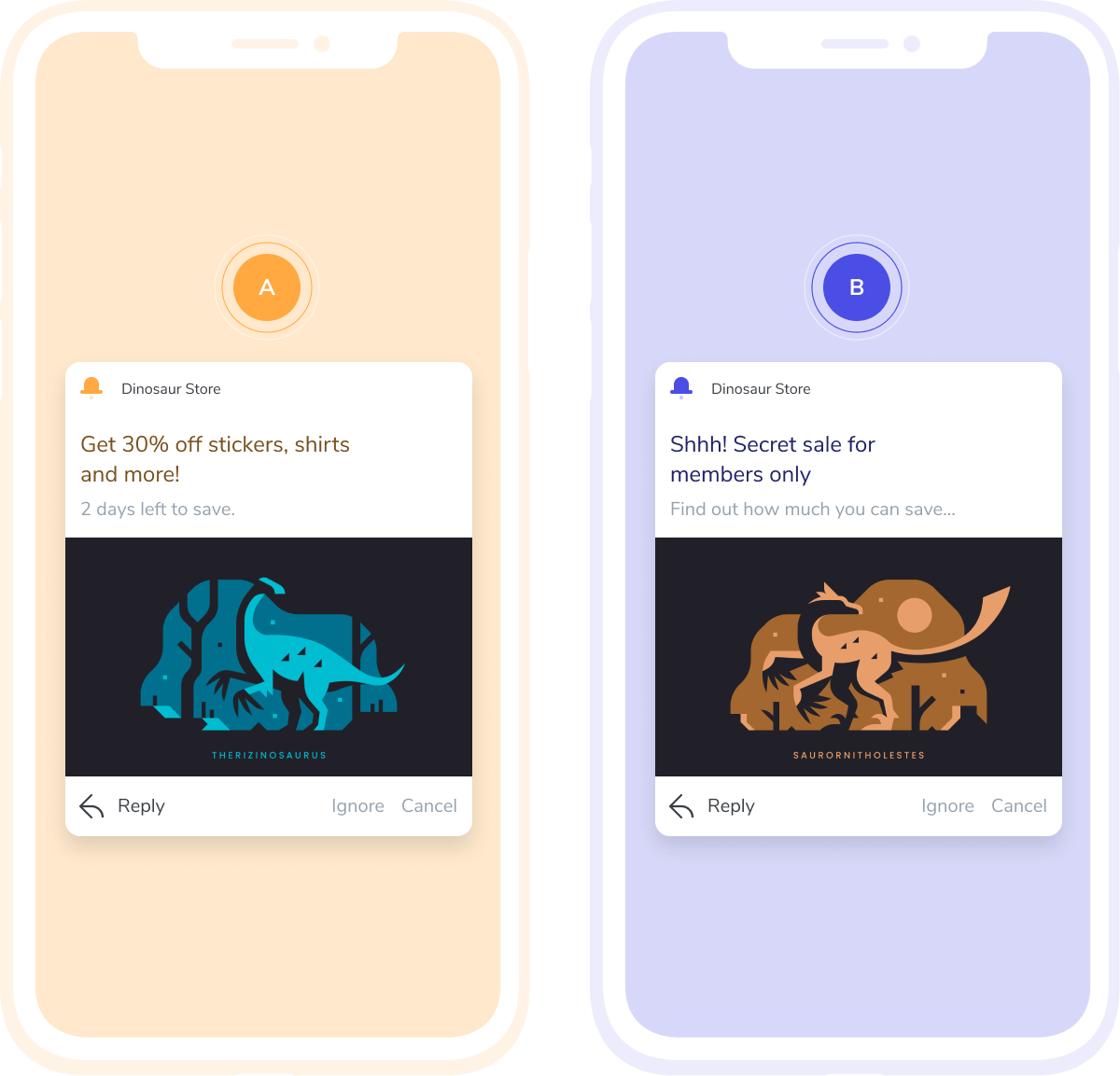

Images

You can keep the notification message copy the same, but use different images in each notification to determine whether images have an impact on notification performance. Try different image sizes, different product icons, or different header images. Even sending the same message copy once with an image and once without can yield valuable information.

GIFs

Notifications with rich media have been found to generate a 25 percent increase in engagement. As with images, you can test different GIFs or send one message with a GIF and one without. GIFs may not be appropriate for every notification, but A/B tests can help you determine when they are effective and when they aren’t.

Emojis

The presence of emojis, or the use of different emojis, makes for a great A/B test. A HubSpot study found that push notifications with emojis saw an 85 percent increase in open rates. Set up an emoji A/B test to see whether this statistic holds for your notifications as well.

Timing

This type of test will help you figure out the best time of day to send push notifications, or even the best days of the week, months, or seasons. While keeping the message content the same, you can A/B test how timing impacts engagement by sending the message at different times. Within OneSignal, you can use the intelligent delivery feature to optimize message timing and compare the results to messages sent at a specific time.

Location

Grouping your users by city, state, or country can help you hone your promotional strategy around specific events. For example, the Super Bowl is held in a US host city each year, which gives you a chance to test targeted messages about the game and related local events with users in that city.

Geolocation notifications are also perfect for A/B testing. For example, you can test whether a five-mile or 10-mile targeting radius is more effective. This is particularly useful when customers are near your stores or restaurants, because it helps you identify whether a geolocation notification drove them to a specific location.

A/B Testing Pitfalls and Best Practices

Test One Variable at a Time

While there are a lot of potential variables to test, it’s important to remember to test one variable at a time. If you test images at the same time as humor, it will be difficult to determine which variable contributed to the increase in engagement. Keeping things simple will produce clearer results.
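
One way to enforce this rule before sending is to compare the two variants field by field. The snippet below is a minimal sketch, not part of any OneSignal API: the `differing_fields` helper and the `title`, `body`, and `image` field names are hypothetical and only illustrate the check.

```python
def differing_fields(variant_a: dict, variant_b: dict) -> list[str]:
    """Return the sorted names of fields whose values differ between two variants."""
    keys = set(variant_a) | set(variant_b)
    return sorted(k for k in keys if variant_a.get(k) != variant_b.get(k))


# Hypothetical notification variants; the field names are illustrative only.
variant_a = {
    "title": "Weekend deal",
    "body": "Save 10 percent on all shake orders.",
    "image": None,
}
variant_b = {
    "title": "Weekend deal",
    "body": "Save 10 percent on all shake orders.",
    "image": "shake.png",
}

changed = differing_fields(variant_a, variant_b)
if len(changed) == 1:
    print(f"OK: only '{changed[0]}' differs between the variants")
else:
    print(f"Variants differ in {len(changed)} fields: {changed}")
```

If the check reports more than one differing field, consider splitting the experiment into separate tests so each result can be traced to a single change.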

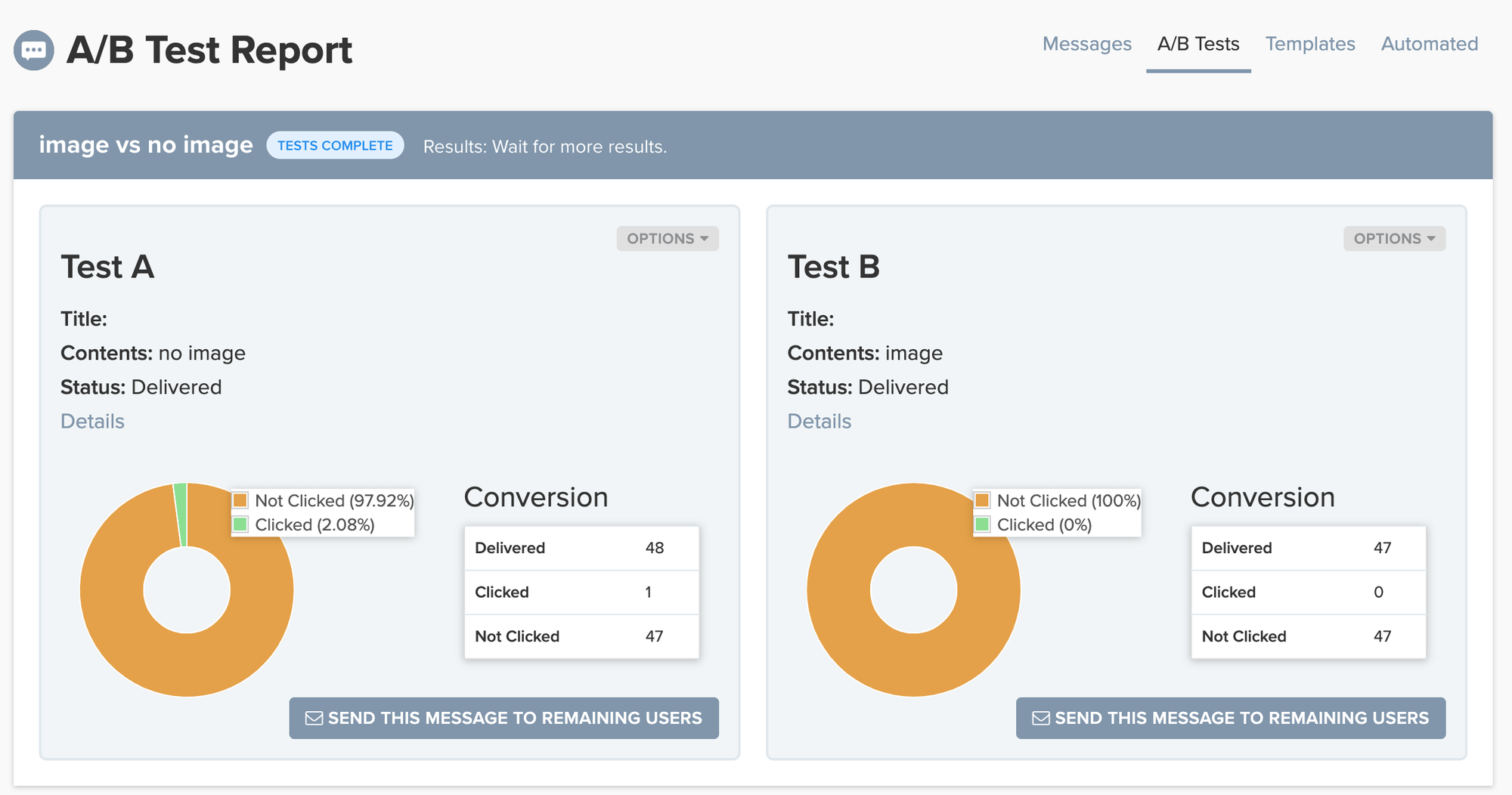

Be Thoughtful When Interpreting Your Results

When reviewing the results of an A/B test, be thoughtful about how you interpret them. Think back to your hypothesis to contextualize results. A variant could clearly have a higher click-through rate, but you may notice that revenue did not actually increase. Does this result align with your business goals? You could also see a difference in the open rate versus the conversion rate. It’s important to consider the bigger picture when reviewing test results.

Another consideration to keep in mind is your test sample size. If the sample is too small, or the test ends too soon, the results may not be definitive or accurate. To conduct a meaningful A/B test, you need a reasonably large target list of at least 1,000 contacts.
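
To see why sample size matters, you can check whether a difference in click-through rate is larger than chance alone would explain. The sketch below uses a standard two-proportion z-test, which is one common approach and not necessarily how OneSignal evaluates tests. The function name and all of the click and send counts are hypothetical.

```python
import math


def two_proportion_z_test(clicks_a: int, sends_a: int, clicks_b: int, sends_b: int):
    """Return (z, two-sided p-value) for the difference between two click-through rates."""
    rate_a = clicks_a / sends_a
    rate_b = clicks_b / sends_b
    # Pooled rate under the assumption that both variants perform the same.
    pooled = (clicks_a + clicks_b) / (sends_a + sends_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / sends_a + 1 / sends_b))
    if std_err == 0:
        raise ValueError("Cannot test: no variation in outcomes (all clicks or no clicks).")
    z = (rate_b - rate_a) / std_err
    # Two-sided p-value from the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


# Hypothetical data: the same 10% vs. 13% CTR observed at two list sizes.
_, p_small = two_proportion_z_test(clicks_a=20, sends_a=200, clicks_b=26, sends_b=200)
_, p_large = two_proportion_z_test(clicks_a=200, sends_a=2000, clicks_b=260, sends_b=2000)

print(f"200 sends per variant:  p = {p_small:.3f}")
print(f"2000 sends per variant: p = {p_large:.3f}")
```

With these made-up counts, the smaller test does not reach the conventional 0.05 significance threshold, while the larger one does, even though the observed click-through rates are identical.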

Iteration is also key, so do more than one test! The more you test, the more conclusive the results will be. Additionally, a recent A/B test “winner” is often a perfect starting point for your next A/B test. Further segmenting your list or incorporating personalization into the message copy are other angles of iteration that can provide more specific and valuable results.

For example, imagine you’re A/B testing how a notification performs with an image versus without an image. Your test reveals that the notification with an image yields a 15 percent higher click-through rate. For a follow-up A/B test, you can send separate notifications with the same “winning” image, but add message personalization to only one of them to see if that can further increase the CTR. Continue to re-test and iterate to further understand the results and gain additional insights.
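
As a worked illustration of the comparison above, the sketch below computes each variant's click-through rate and the relative lift between them. It assumes "15 percent higher" means a relative increase rather than 15 percentage points, and the click and send counts are invented for the example.

```python
def click_through_rate(clicks: int, sends: int) -> float:
    """Fraction of sent notifications that were clicked."""
    return clicks / sends


def relative_lift(control_rate: float, variant_rate: float) -> float:
    """Relative change of the variant's rate compared with the control's rate."""
    return (variant_rate - control_rate) / control_rate


# Hypothetical counts chosen to reproduce a 15 percent relative lift.
without_image = click_through_rate(clicks=400, sends=10_000)
with_image = click_through_rate(clicks=460, sends=10_000)

lift = relative_lift(without_image, with_image)
print(f"CTR without image: {without_image:.1%}")
print(f"CTR with image:    {with_image:.1%}")
print(f"Relative lift:     {lift:.0%}")
```

Note that a 15 percent relative lift here corresponds to only 0.6 percentage points in absolute terms, which is worth keeping in mind when weighing the result against your business goals.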

Start Testing Your Notifications to Improve Engagement

A/B testing notifications can help guide your communication strategy and provide insights that can be replicated in other campaigns. You don't need any experience to get started. All you need to do is create the test and learn from the results. To learn how to create your first A/B test in OneSignal, check out our step-by-step A/B testing instructions below and log in to your account to get started.

View our A/B Testing Guide